I am currently a staff engineer at Momenta, where I focus on autonomous driving technologies, including obstacle perception, world model development, and end-to-end solutions. Previously, I worked on perception systems at KargoBot and DiDi, specializing in 3D object detection, occupancy prediction, and data fusion. Before that, I was an algorithm engineer at Aibee, working on face recognition, image retrieval, and re-identification.

I completed my Master's degree in Industrial Engineering at the University of Washington, Seattle. Before that, I received my Bachelor's degree in Mechanical Engineering from the University of Science and Technology of China, where I ranked 3rd out of 61 students by GPA.

E-mail | Curriculum Vitae | Publications | GitHub

- 03/2026: Our paper "GEM: Generating LiDAR World Model via Deformable Mamba" was accepted by CVPR 2026.

- 11/2025: Our paper "Sparse Annotation, Dense Supervision: Unleashing Self-Training Power for Occupancy Prediction With 2D Labels" was accepted by RA-L 2025.

- 09/2025: Our paper "COME: Adding Scene-Centric Forecasting Control to Occupancy World Model" was accepted by NeurIPS 2025.

- 09/2024: Our paper "OPUS: Occupancy Prediction Using a Sparse Set" was accepted by NeurIPS 2024.

- 07/2024: Our paper "Towards Stable 3D Object Detection" was accepted by ECCV 2024.

- 02/2023: Our paper "Curricular Object Manipulation in LiDAR-based Object Detection" was accepted by CVPR 2023.

- 04/2022: Invited to give a talk at Beijing Jiaotong University.

- 03/2022: Invited to give a talk at the ICLR live-streamed paper-sharing session organized by ReadPaper.

- 01/2022: Our paper "Improving Federated Learning Face Recognition via Privacy-Agnostic Clusters" was accepted by ICLR 2022 as a spotlight paper.

- 11/2021: Invited to give a talk at the workshop on face image quality organized by EAB, DHS-OBIM, NIST, eu-LISA, and others.

- 07/2021: Our paper "Learning Compatible Embeddings" was accepted by ICCV 2021.

- 06/2021: Invited to give a talk about MagFace at the CVPR paper-sharing session organized by 机器之心 (Synced).

- 03/2021: Our paper "MagFace: A Universal Representation for Face Recognition and Quality Assessment" was accepted by CVPR 2021 as an oral paper.

- 12/2020: Our paper "Searching for Alignment in Face Recognition" was accepted by AAAI 2021.

My research interests lie in computer vision, deep learning and optimization. Representative works are highlighted.

GEM: Generating LiDAR World Model via Deformable Mamba

Yang Wu, Zhaojiang Liu, Qiang Meng, Youquan Liu, Renliang Weng, Jianjun Qian, Jian Yang, Jin Xie

CVPR, 2026

paper | code

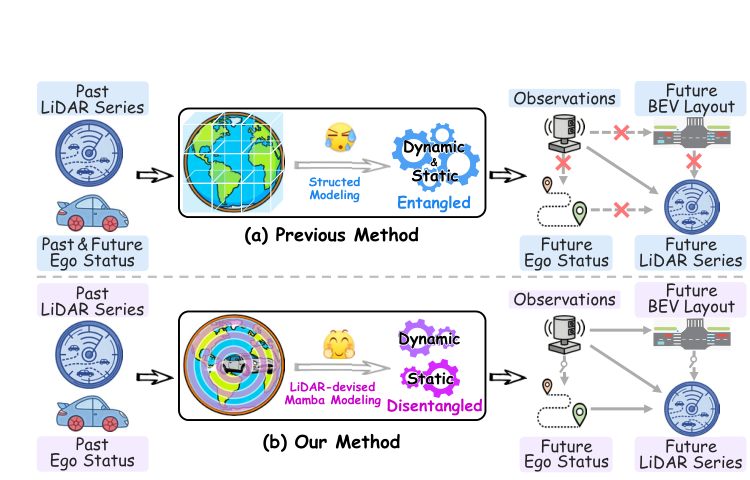

GEM is a generative LiDAR world model that leverages a deformable Mamba architecture with dynamic-static disentanglement to achieve state-of-the-art LiDAR scene generation and autonomous rollout.

COME: Adding Scene-Centric Forecasting Control to Occupancy World Model

Yining Shi, Kun Jiang, Qiang Meng, Ke Wang, Jiabao Wang, Wenchao Sun, Tuopu Wen, Mengmeng Yang, Diange Yang

NeurIPS, 2025

paper | code

COME is an occupancy world model that integrates scene-centric forecasting control to produce spatially and temporally coherent forecasts.

OPUS: Occupancy Prediction Using a Sparse Set

Jiabao Wang*, Zhaojiang Liu*, Qiang Meng, Liujiang Yan, Ke Wang, Jie Yang, Wei Liu, Qibin Hou, Ming-Ming Cheng

*equal contribution

NeurIPS, 2024

paper | code

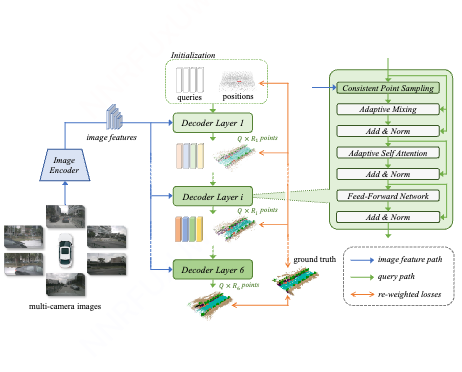

OPUS is a fully sparse, end-to-end framework for occupancy prediction. It uses a transformer encoder-decoder architecture to simultaneously predict occupied locations and their classes from a set of learnable queries.

Towards Stable 3D Object Detection

Jiabao Wang*, Qiang Meng*, Guochao Liu, Liujiang Yan, Ke Wang, Ming-Ming Cheng, Qibin Hou

*equal contribution

ECCV, 2024

project page | paper | code

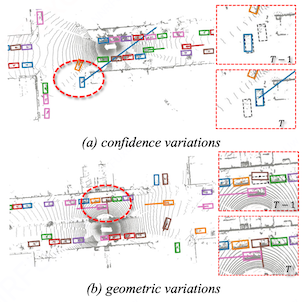

This paper introduces a novel stability index (SI) to evaluate the stability of 3D object detection models and proposes a strong baseline for improving stability.

Qiang Meng, Xiao Wang, Jiabao Wang, Liujiang Yan, Ke Wang

arXiv, 2024

paper

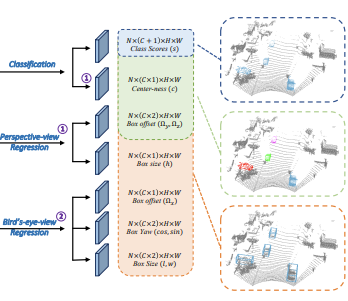

The Small, Versatile, and Mighty (SVM) framework is a range-view-based perception system that performs object detection, semantic segmentation, and panoptic segmentation.

Curricular Object Manipulation in LiDAR-based Object Detection

Ziyue Zhu*, Qiang Meng*, Xiao Wang, Ke Wang, Liujiang Yan, Jian Yang

*equal contribution

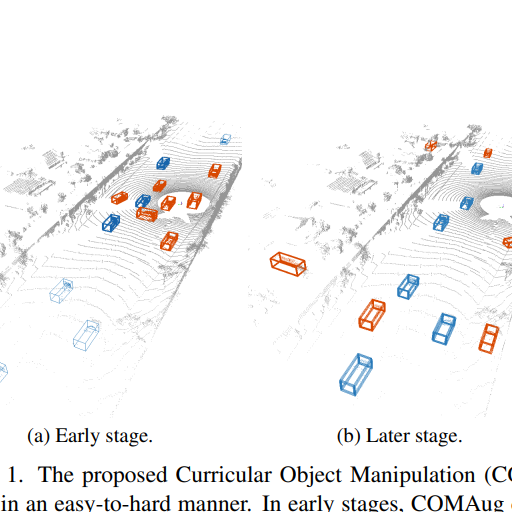

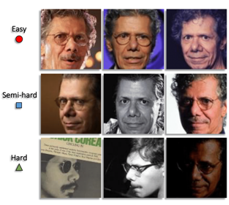

CVPR, 2023

paper | code

Curricular object manipulation (COM) is a framework for LiDAR-based object detection that incorporates an easy-to-hard training strategy into both the loss design and the augmentation process.

Qiang Meng, Feng Zhou

arXiv, 2022

paper | code

SecureVector is a plug-in module for real-time, lossless feature matching among sanitized features, offering significantly higher security than current state-of-the-art solutions.

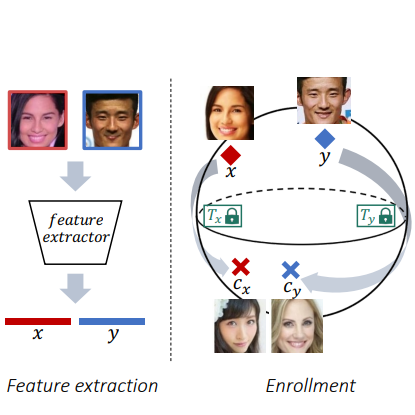

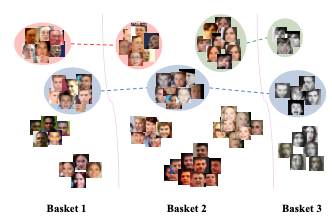

Improving Federated Learning Face Recognition via Privacy-Agnostic Clusters

Qiang Meng, Feng Zhou, Hainan Ren, Tianshu Feng, Guochao Liu, Yuanqing Lin

ICLR, 2022 (Spotlight)

project page | paper | Zhihu

A practical framework that substantially improves federated learning for face recognition while providing privacy guarantees. Its key components are a differentially private local clustering mechanism and a consensus-aware recognition loss.

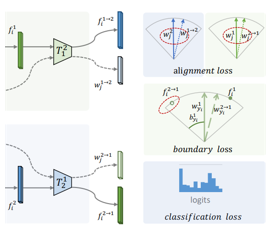

Learning Compatible Embeddings

Qiang Meng, Chixiang Zhang, Xiaqiang Xu, Feng Zhou

ICCV, 2021

project page | paper | Zhihu | short video | code

A general framework (LCE) applicable to both cross-model compatibility and compatible training in direct, forward, and backward manners.

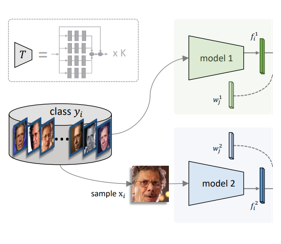

MagFace: A Universal Representation for Face Recognition and Quality Assessment

Qiang Meng, Shichao Zhao, Zhida Huang, Feng Zhou

CVPR, 2021 (Oral presentation)

project page | paper | Zhihu | short video | code

A novel loss that enables feature magnitudes to represent face quality while also improving face recognition and clustering performance. Notably, no additional labels are required.

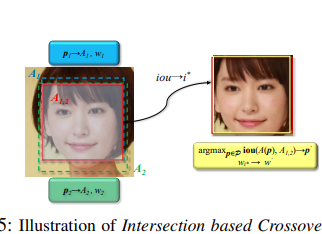

Qiang Meng, Xinqian Gu, Xiaqing Xu, Feng Zhou

arXiv, 2022

paper

A simple but effective mining-during-training strategy that enables models to be trained in an end-to-end fashion on multiple datasets.

Searching for Alignment in Face Recognition

Xiaqing Xu, Qiang Meng, Yunxiao Qin, Jianzhu Guo, Chenxu Zhao, Feng Zhou, Zhen Lei

AAAI, 2021

paper | Zhihu

We design a face template search space decomposed into crop size and vertical shift, and propose Face Alignment Policy Search (FAPS) to find optimal alignment templates for face recognition.

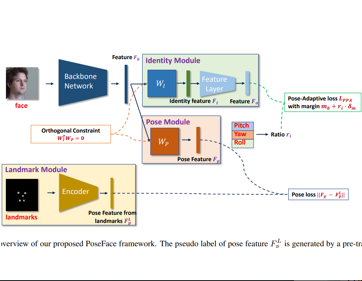

Qiang Meng, Xiaqing Xu, Xiaobo Wang, Yang Qian, Yunxiao Qin, Zezheng Wang, Chenxu Zhao, Feng Zhou, Zhen Lei

arXiv, 2021

paper

An efficient large-pose face recognition method that leverages facial landmarks to disentangle pose-invariant features and incorporates a pose-adaptive loss to dynamically address pose imbalance.

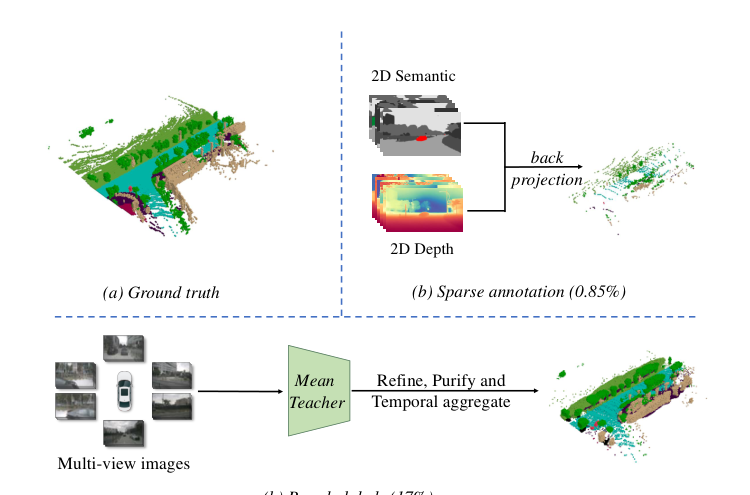

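Sparse Annotation, Dense Supervision: Unleashing Self-Training Power for Occupancy Prediction With 2D Labels

Zhaojiang Liu, Zhipeng Zhang, Qiang Meng, Yishu Wang, Dianmin Zhang, Liujiang Yan, Ke Wang, Zhonglong Zheng, Jie Yang, Wei Liu

RA-L, 2025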

paper

A self-training framework for 3D occupancy prediction that leverages a mean teacher with temporal aggregation to generate dense pseudo-labels from sparse 2D annotations, achieving performance comparable to fully supervised methods.